Currently R runs on 32- and 64-bit operating systems, and most 64-bit OSes (including Linux, Solaris, Windows and macOS) can run either 32- or 64-bit builds of R. The memory limits depend mainly on the build, but for a 32-bit build of R on Windows they also depend on the underlying OS version.

From release 1.3.1, R has partial support for AppleScript. This means two things: you can run AppleScripts from inside R using the command applescript() (see the corresponding help), or you can ask R to run commands from an AppleScript. The directory 'scripts' in the main R folder contains two examples of AppleScripts.

Memory

A solid understanding of R’s memory management will help you predict how much memory you’ll need for a given task and help you to make the most of the memory you have. It can even help you write faster code because accidental copies are a major cause of slow code. The goal of this chapter is to help you understand the basics of memory management in R, moving from individual objects to functions to larger blocks of code. Along the way, you’ll learn about some common myths, such as that you need to call gc() to free up memory, or that for loops are always slow.

Outline

The section "Object size" shows you how to use object_size() to see how much memory an object occupies, and uses that as a launching point to improve your understanding of how R objects are stored in memory.

The next section introduces you to the mem_used() and mem_change() functions that will help you understand how R allocates and frees memory.

The section "Memory profiling with lineprof" shows you how to use the lineprof package to understand how memory is allocated and released in larger code blocks.

The final section introduces you to the address() and refs() functions so that you can understand when R modifies in place and when R modifies a copy. Understanding when objects are copied is very important for writing efficient R code.

Prerequisites

In this chapter, we’ll use tools from the pryr and lineprof packages to understand memory usage, and a sample dataset from ggplot2.

If you don’t already have them, run this code to get the packages you need.

    install.packages('ggplot2')
    install.packages('pryr')
    install.packages('devtools')
    devtools::install_github('hadley/lineprof')

Sources

The details of R’s memory management are not documented in a single place. Most of the information in this chapter was gleaned from a close reading of the documentation (particularly ?Memory and ?gc) and the relevant sections of R-exts and R-ints. The rest I figured out by reading the C source code, performing small experiments, and asking questions on R-devel. Any mistakes are entirely mine.

Object size

To understand memory usage in R, we will start with pryr::object_size(). This function tells you how many bytes of memory an object occupies.

    object_size(mtcars)
    ## 6.74 kB

(This function is better than the built-in object.size() because it accounts for shared elements within an object and includes the size of environments.)

Something interesting occurs if we use object_size() to systematically explore the size of an integer vector.

Consider computing and plotting the memory usage of integer vectors ranging in length from 0 to 50 elements. You might expect that the size of an empty vector would be zero and that memory usage would grow proportionately with length. Neither of those things is true!

    object_size(list())
    ## 40 B

Those 40 bytes are used to store four components possessed by every object in R:

Object metadata (4 bytes). These metadata store the base type (e.g. integer) and information used for debugging and memory management.

Two pointers: one to the next object in memory and one to the previous object (2 × 8 bytes). This doubly-linked list makes it easy for internal R code to loop through every object in memory.

A pointer to the attributes (8 bytes).

All vectors have three additional components:

The length of the vector (4 bytes). By using only 4 bytes, you might expect that R could only support vectors up to 2^(4 × 8 − 1) (2^31, about two billion) elements. But in R 3.0.0 and later, you can actually have vectors up to 2^52 elements. The R internals documentation describes how support for long vectors was added without having to change the size of this field.

The “true” length of the vector (4 bytes). This is basically never used, except when the object is the hash table used for an environment. In that case, the true length represents the allocated space, and the length represents the space currently used.

The data (?? bytes). An empty vector has 0 bytes of data. Numeric vectors occupy 8 bytes for every element, integer vectors 4, and complex vectors 16.

If you’re keeping count you’ll notice that this only adds up to 36 bytes. The remaining 4 bytes are used for padding so that each component starts on an 8 byte (= 64-bit) boundary. Most CPU architectures require pointers to be aligned in this way, and even if they don’t require it, accessing non-aligned pointers tends to be rather slow.
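The byte counts above can be added up directly. The following is a small worked sketch of that arithmetic, using only the component sizes quoted in this section; the variable names are illustrative and not part of any package.

```r
# Fixed overhead of an empty vector, using the byte counts listed above.
components <- c(
  metadata    = 4,
  pointers    = 2 * 8,  # next and previous object
  attributes  = 8,
  length      = 4,
  true_length = 4
)

raw_total <- sum(components)                 # 36 bytes before padding
padded_total <- 8 * ceiling(raw_total / 8)   # rounded up to an 8-byte boundary: 40

c(raw = raw_total, padded = padded_total)
```

Rounding 36 up to the next multiple of 8 gives the 40 bytes reported for an empty vector.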

This explains the intercept on the graph. But why does the memory size grow irregularly? To understand why, you need to know a little bit about how R requests memory from the operating system. Requesting memory (with malloc) is a relatively expensive operation. Having to request memory every time a small vector is created would slow R down considerably. Instead, R asks for a big block of memory and then manages that block itself.

This block is called the small vector pool and is used for vectors less than 128 bytes long. For efficiency and simplicity, it only allocates vectors that are 8, 16, 32, 48, 64, or 128 bytes long. If we adjust our previous plot to remove the 40 bytes of overhead, we can see that those values correspond to the jumps in memory use.
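The plotting code in this section relies on a vector named sizes holding one size per vector length. A minimal sketch of how such a vector could be computed follows. It assumes base R's object.size() as a stand-in for pryr::object_size() so that it runs without extra packages, and the name lens is illustrative. Exact byte counts depend on the R version and platform, so the overhead you see may differ from the 40 bytes quoted here.

```r
# Size in bytes of integer vectors of length 0 to 50.
# Assumption: base object.size() stands in for pryr::object_size().
lens <- 0:50
sizes <- vapply(
  lens,
  function(n) as.numeric(object.size(integer(n))),
  numeric(1)
)

# Draw the step plot only in an interactive session.
if (interactive()) {
  plot(lens, sizes, xlab = "Length", ylab = "Size (bytes)", type = "s")
}
```

The first element, sizes[1], is the size of an empty vector, so subtracting it leaves only the bytes reserved for the data.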

    plot(0:50, sizes - 40, xlab = 'Length', ylab = 'Bytes excluding overhead', type = 'n')
    abline(h = 0, col = 'grey80')
    abline(h = c(8, 16, 32, 48, 64, 128), col = 'grey80')
    abline(a = 0, b = 4, col = 'grey90', lwd = 4)
    lines(sizes - 40, type = 's')

Beyond 128 bytes, it no longer makes sense for R to manage vectors. After all, allocating big chunks of memory is something that operating systems are very good at. Beyond 128 bytes, R will ask for memory in multiples of 8 bytes. This ensures good alignment.
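The allocation scheme just described can be written as a small function. This is a sketch of the rule as stated in the text, not R's actual allocator code; alloc_bytes is a hypothetical helper name.

```r
# Hypothetical helper: bytes reserved for the data part of a vector that
# needs `n_bytes`, following the scheme described in the text.
alloc_bytes <- function(n_bytes) {
  pool <- c(8, 16, 32, 48, 64, 128)  # small vector pool size classes
  if (n_bytes == 0) return(0)        # an empty vector has no data
  if (n_bytes <= 128) return(pool[which(pool >= n_bytes)[1]])
  8 * ceiling(n_bytes / 8)           # larger vectors: next multiple of 8
}

# Integer vectors use 4 bytes per element.
sapply(c(0, 1, 5, 20, 33), function(n) alloc_bytes(4 * n))
# 0 8 32 128 136
```

For example, an integer vector of length 5 needs 20 bytes of data, which falls into the 32-byte class, while one of length 33 needs 132 bytes and is rounded up to 136.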

A subtlety of the size of an object is that components can be shared across multiple objects. For example, look at the following code.

    object_size(rep('banana', 10))
    ## 216 B

Exercises

Repeat the analysis above for numeric, logical, and complex vectors.

If a data frame has one million rows, and three variables (two numeric, and one integer), how much space will it take up? Work it out from theory, then verify your work by creating a data frame and measuring its size.

Compare the sizes of the elements in the following two lists.

Each contains basically the same data, but one contains vectors of small strings while the other contains a single long string.

mem_used() tells you how much memory is currently used by R objects.

    library(pryr)
    mem_used()
    ## 45 MB

This number won’t agree with the amount of memory reported by your operating system for a number of reasons:

It only includes objects created by R, not the R interpreter itself.

Both R and the operating system are lazy: they won’t reclaim memory until it’s actually needed. R might be holding on to memory because the OS hasn’t yet asked for it back.

R counts the memory occupied by objects but there may be gaps due to deleted objects. This problem is known as memory fragmentation.

mem_change() builds on top of mem_used() to tell you how memory changes during code execution. Positive numbers represent an increase in the memory used by R, and negative numbers represent a decrease.

    # Now nothing points to it and the memory can be freed
    mem_change(rm(y))
    ## -4 MB

Despite what you might have read elsewhere, there’s never any need to call gc() yourself.

R will automatically run garbage collection whenever it needs more space; if you want to see when that is, call gcinfo(TRUE). The only reason you might want to call gc() is to ask R to return memory to the operating system. However, even that might not have any effect: older versions of Windows had no way for a program to return memory to the OS.

GC takes care of releasing objects that are no longer used. However, you do need to be aware of possible memory leaks. A memory leak occurs when you keep pointing to an object without realising it. In R, the two main causes of memory leaks are formulas and closures because they both capture the enclosing environment.

Three functions, f1, f2 and f3, illustrate the problem. In f1, 1:1e6 is only referenced inside the function, so when the function completes the memory is returned and the net memory change is 0. f2 and f3 both return objects that capture environments, so that x is not freed when the function completes.
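A minimal sketch of three such functions follows. The function bodies are reconstructed from the description above and are an assumption, as are the result names x1 and y. It uses only base R, and checks reachability with exists() rather than measuring memory.

```r
f1 <- function() {
  x <- 1:1e6       # only referenced inside the function
  10
}
f2 <- function() {
  x <- 1:1e6
  a ~ b            # a formula captures the environment it was created in
}
f3 <- function() {
  x <- 1:1e6
  function() 10    # a closure captures its enclosing environment too
}

x1 <- f1()
y <- f2()
z <- f3()

# x is still reachable through the environments captured by y and z,
# so the garbage collector cannot free it.
exists("x", envir = environment(y), inherits = FALSE)
exists("x", envir = environment(z), inherits = FALSE)
```

Both calls to exists() return TRUE, which is why the memory held by x survives after f2 and f3 return.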

    object_size(z)
    ## 4.01 MB

Memory profiling with lineprof

mem_change() captures the net change in memory when running a block of code. Sometimes, however, we may want to measure incremental change. One way to do this is to use memory profiling to capture usage every few milliseconds. This functionality is provided by utils::Rprof() but it doesn’t provide a very useful display of the results. Instead we’ll use the lineprof package. It is powered by Rprof(), but displays the results in a more informative manner.

To demonstrate lineprof, we’re going to explore a bare-bones implementation of read.delim() with only three arguments.

    library(lineprof)
    source('code/read-delim.R')

For interactive use, tracemem() is slightly more useful than refs(), but because it just prints a message, it’s harder to program with. I don’t use it in this book because it interacts poorly with the tool I use to interleave text and code.

Non-primitive functions that touch the object always increment the ref count. Primitive functions usually don’t. (The reasons are a little complicated, but see the R-devel thread.)
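As a rough illustration of tracemem() in interactive use, the sketch below marks a vector and then modifies a second name bound to it. It assumes nothing beyond base R. tracemem() only works when R was built with memory profiling, so the calls are guarded with capabilities("profmem").

```r
x <- c(1, 2, 3)

# tracemem() is only available when R was built with memory profiling.
if (capabilities("profmem")) tracemem(x)

y <- x        # no copy yet: x and y refer to the same vector
y[1] <- 10    # modifying y forces a copy; tracemem() reports it if enabled

if (capabilities("profmem")) untracemem(x)

x
y
```

When tracing is enabled, a message is printed at the point where the copy is made, and x keeps its original values.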

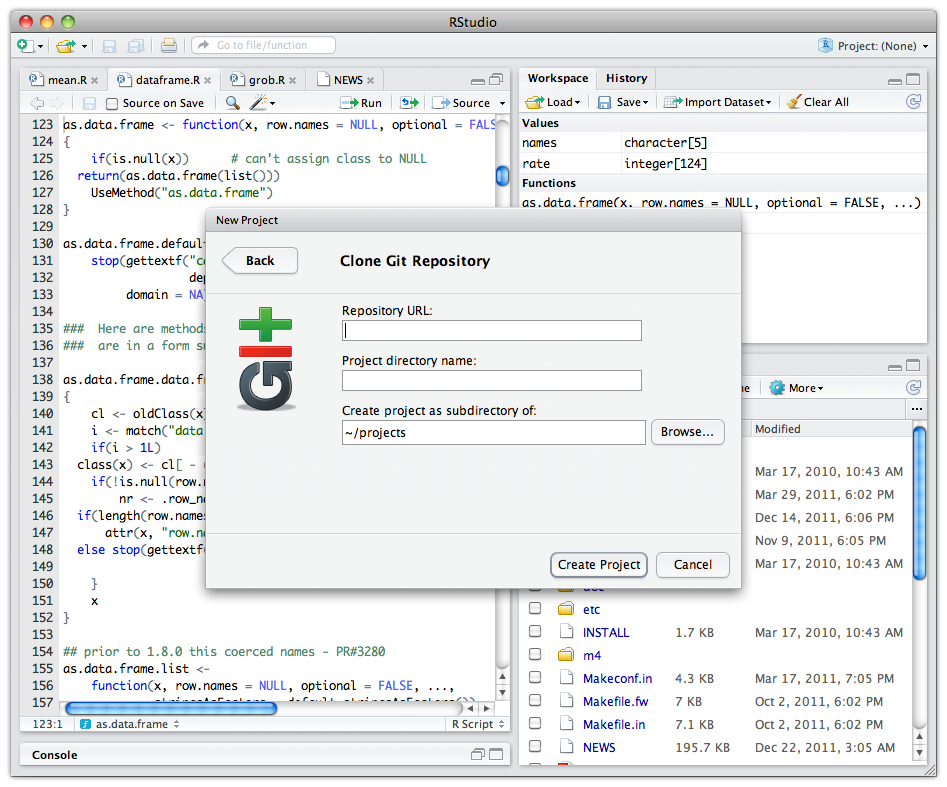
